Instagram has announced changes to how it handles removing the accounts of users who post content such as hate speech, nudity, bullying, and other inappropriate material. Users whose accounts are in danger of being removed will now get a warning notification.

> Under our existing policy, we disable accounts that have a certain percentage of violating content. We are now rolling out a new policy where, in addition to removing accounts with a certain percentage of violating content, we will also remove accounts with a certain number of violations within a window of time. Similarly to how policies are enforced on Facebook, this change will allow us to enforce our policies more consistently and hold people accountable for what they post on Instagram.

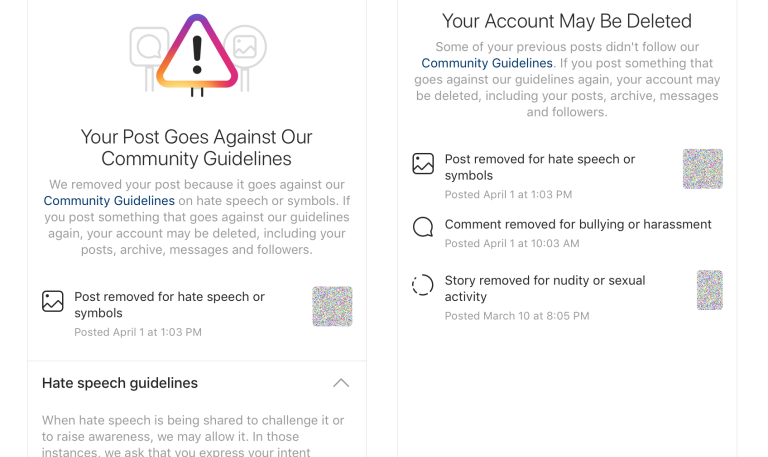

> We are also introducing a new notification process to help people understand if their account is at risk of being disabled. This notification will also offer the opportunity to appeal content deleted. To start, appeals will be available for content deleted for violations of our nudity and pornography, bullying and harassment, hate speech, drug sales, and counter-terrorism policies, but we’ll be expanding appeals in the coming months. If content is found to be removed in error, we will restore the post and remove the violation from the account’s record. We’ve always given people the option to appeal disabled accounts through our Help Center, and in the next few months, we’ll bring this experience directly within Instagram.

Users' accounts will now be deleted if they accumulate a certain number of violations within a specific timeframe. Instagram will send notifications to accounts that are in danger of being deleted, with the option to appeal the violations.