A new report from more than a dozen cybersecurity researchers says Apple’s CSAM prevention plans, and the EU’s similar proposals, represent “dangerous technology” that expands the “surveillance powers of the state.”

Apple has postponed the release of its child protection features because of concerns raised by security experts. The researchers say similar plans from Apple and the European Union represent a national security issue.

The authors of the report (via The New York Times) say they wanted to warn the EU about this “dangerous technology.”

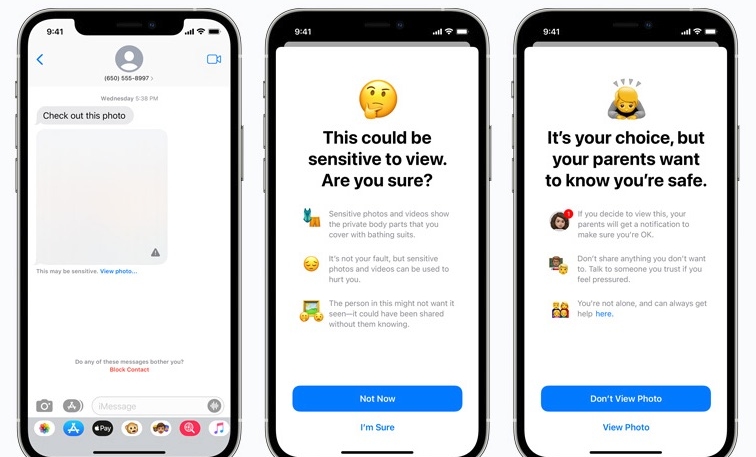

Apple plans to eventually put in place a suite of tools that the Cupertino firm says will help protect children and limit the spread of child sexual abuse material (CSAM). One part of the system would block suspected harmful images from appearing in Messages. The other part would automatically scan images stored in iCloud.

Apple’s plan is similar to one that Google has used since 2008 to scan Gmail for harmful images. However, privacy groups have become more concerned since Apple announced its scanning plans, as they believe Apple’s system could lead to governments demanding access to the scanning technology for political purposes.

The report’s authors believe that the EU plans to put in place a system that would work similarly to Apple’s to scan for child abuse images. However, the EU plan goes further, also scanning for criminal and terrorist activity.

“It should be a national-security priority to resist attempts to spy on and influence law-abiding citizens,” the researchers wrote in the report seen by The New York Times.

“It’s allowing scanning of a personal private device without any probable cause for anything illegitimate being done,” said the group’s Susan Landau, professor of cybersecurity and policy at Tufts University. “It’s extraordinarily dangerous. It’s dangerous for business, national security, for public safety, and for privacy.”

Apple has not offered any comment on the report.

(Via AppleInsider)