A New York Times report tells readers about the challenges Apple faces in policing its App Store and highlights a website called the App Danger Project, which attempts to help parents monitor the apps installed on their children’s devices by warning them about apps that seem dangerous. The website uses a machine learning algorithm to flag dangerous apps.

The App Danger Project currently lists 182 apps across Apple’s App Store and Google’s Play Store that have been flagged as having “at least some reviews indicating dangerousness.”

The list can be filtered by platform. Restricting it to Apple’s App Store yields 146 apps.

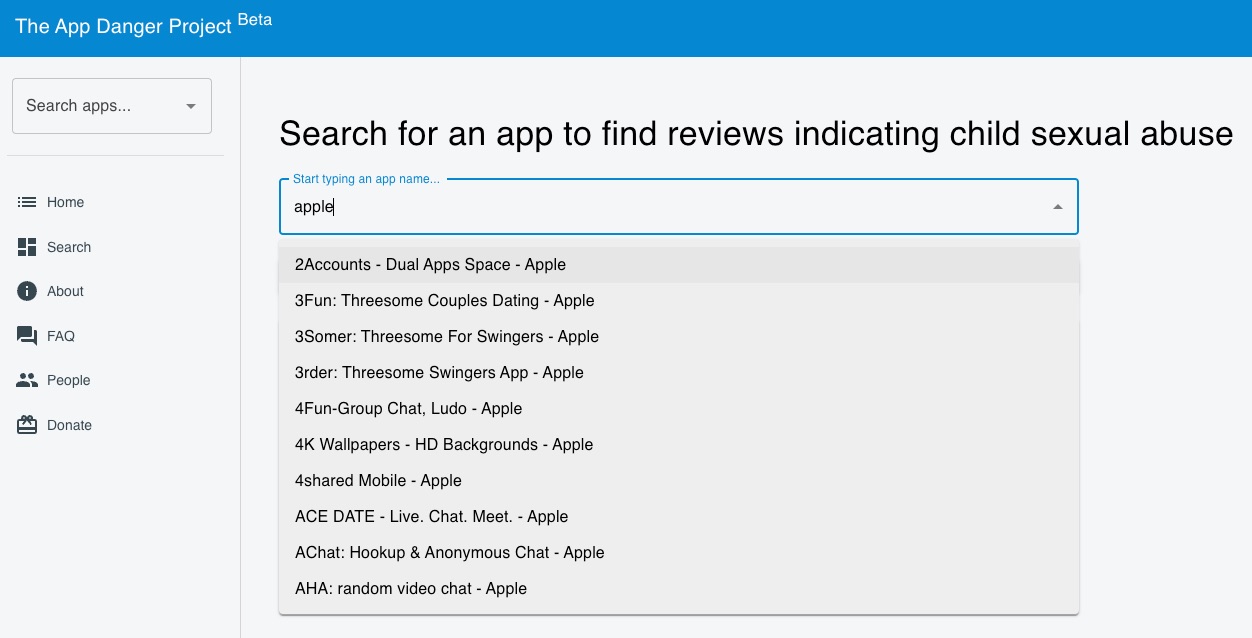

The website also offers a search tool parents can use to find apps from both app stores and view selected reviews. Reviews returned in the search results include those that mention child porn, pedophiles, and other indicators that the app is being used to exploit children.

A search for Snapchat returns 23 reviews “that indicate that this app is unsafe for children.” A search for Instagram returns zero flagged reviews. A search for TikTok shows that the app is not yet in the App Danger Project database.

The NYT reports Apple removed 10 apps from the App Store after investigating some of the apps on the App Danger Project list.

Apple also investigated the apps listed by the App Danger Project and removed 10 that violated its rules for distribution. It declined to provide a list of those apps or the reasons it took action.

“Our App Review team works 24/7 to carefully review every new app and app update to ensure it meets Apple’s standards,” a spokesman said in a statement.

For more background on the App Danger Project, read the New York Times article.