Apple has quietly removed all mentions of CSAM from its Child Safety webpage, indicating that it may have given up on its controversial plan to scan for child sexual abuse images on iPhones and iPads. The feature has faced significant criticism from security researchers and others.

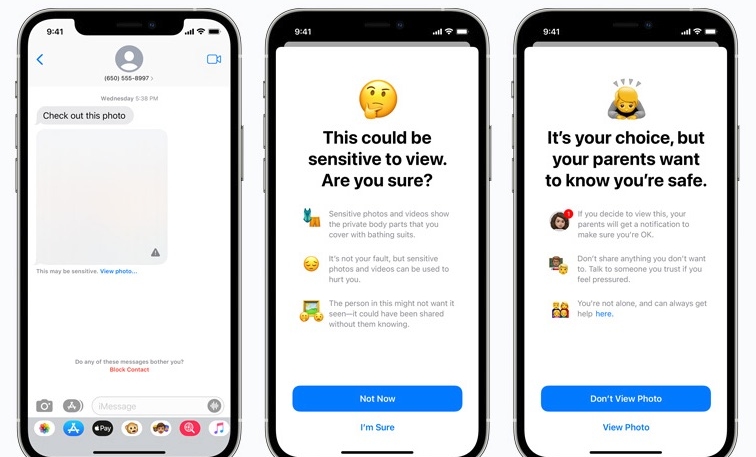

Apple in August announced its planned new suite of child safety features, which included a feature to scan users’ iCloud Photos libraries for Child Sexual Abuse Material (CSAM). The suite also included a Messages feature that uses on-device machine learning to warn about sensitive content.

The new communication tools were designed to allow parents to play a more informed role in helping their children navigate the world of online communication.

The announcement was met with criticism from a variety of individuals and organizations, including security researchers, the Electronic Frontier Foundation (EFF), Edward Snowden, and even Apple employees.

Much of the criticism was aimed at Apple’s planned on-device CSAM detection. Researchers said the feature relied on dangerous technology, which bordered on surveillance. The feature was also criticized for being less than effective in identifying child sexual abuse images.

Apple launched a publicity blitz in an attempt to reassure its critics and users, releasing new FAQs, arranging interviews with Apple executives, and more.

However, the controversy has continued to dog Apple. The Cupertino firm rolled out the Communication Safety features for Messages earlier this week alongside the release of iOS 15.2. It held back on the rollout of CSAM detection, possibly indicating the new feature may be dead in the water.

Apple said its decision to delay was “based on feedback from customers, advocacy groups, researchers and others… we have decided to take additional time over the coming months to collect input and make improvements before releasing these critically important child safety features.”